Tesla has published the release notes for Full Self-Driving (FSD) Beta V11.3, which is believed to be rolling out to the company’s employee FSD Beta testers. The electric vehicle community has been eagerly anticipating this update, as it reportedly brings significant improvements to the FSD Beta software.

According to longtime FSD Beta testers, the new version brings several key improvements that should make the driving experience much smoother and closer to that of a human driver. These include better handling in high-speed and high-curvature scenarios, as well as expanded Automatic Emergency Braking (AEB) capabilities.

The feedback from these testers suggests that Tesla is still testing the new software with its employees before rolling it out to a wider audience of FSD Beta testers. However, based on the company’s previous update patterns, it’s likely that the V11.3 update will become available to the wider fleet in the coming weeks.

It’s worth noting, though, that V11.3 may not see a wider release, just like its predecessor V11, which was made available only to employees and not to the wider FSD Beta testing group.

The following are Tesla’s FSD Beta V11.3 release notes:

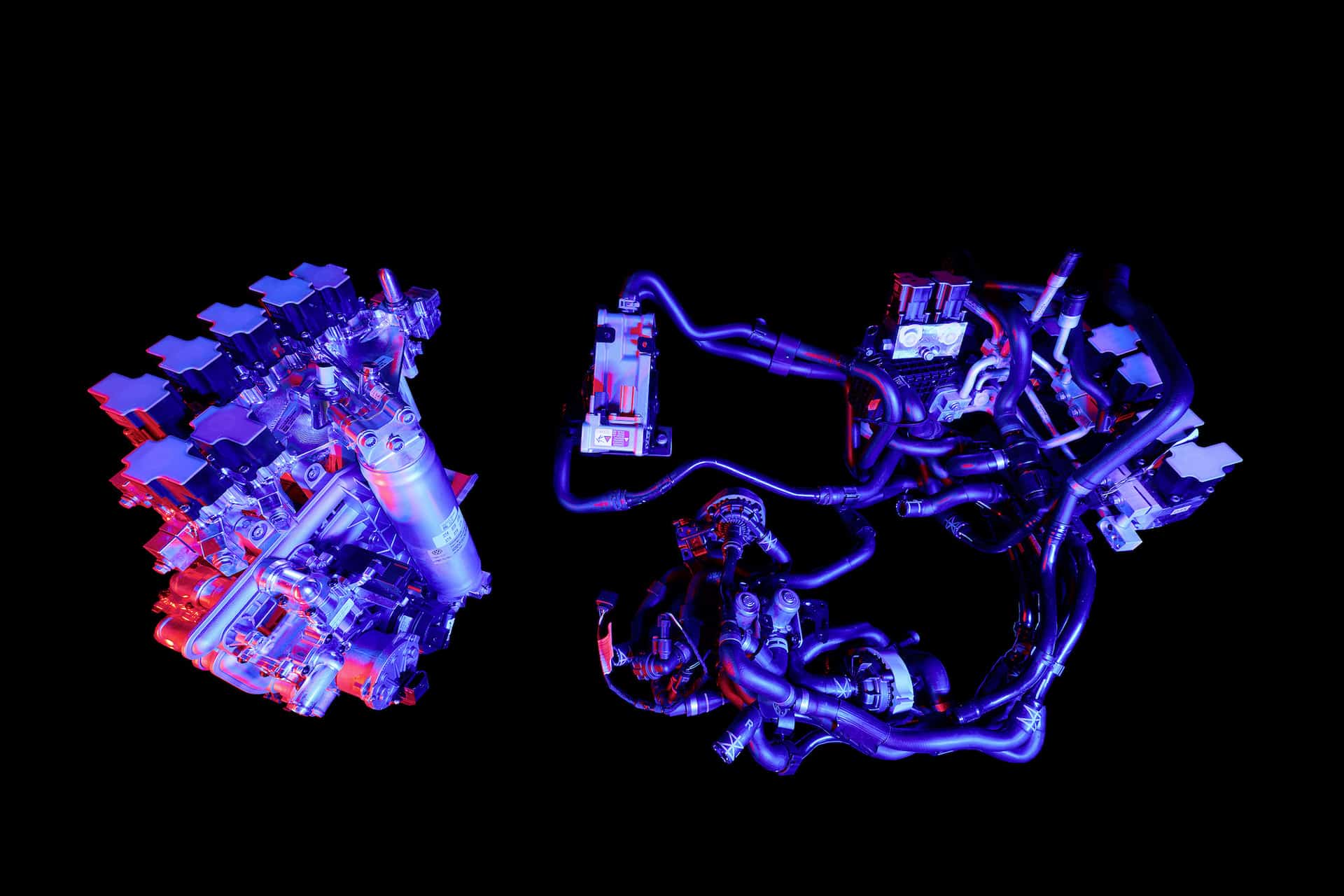

- Enabled FSD Beta on highway. This unifies the vision and planning stack on and off-highway and replaces the legacy highway stack, which is over four years old. The legacy highway stack still relies on several single-camera and single-frame networks, and was set up to handle simple lane-specific maneuvers. FSD Beta’s multi-camera video networks and next-gen planner, which allow for more complex agent interactions with less reliance on lanes, make way for adding more intelligent behaviors, smoother control and better decision making.

- Added voice drive-notes. After an intervention, you can now send Tesla an anonymous voice message describing your experience to help improve Autopilot.

- Expanded Automatic Emergency Braking (AEB) to handle vehicles that cross ego’s path. This includes cases where other vehicles run their red light or turn across ego’s path, stealing the right-of-way. Replay of previous collisions of this type suggests that 49% of the events would be mitigated by the new behavior. This improvement is now active in both manual driving and autopilot operation.

- Improved autopilot reaction time to red light runners and stop sign runners by 500ms, by increased reliance on object’s instantaneous kinematics along with trajectory estimates.

- Added a long-range highway lanes network to enable earlier response to blocked lanes and high curvature.

- Reduced goal pose prediction error for candidate trajectory neural network by 40% and reduced runtime by 3X. This was achieved by improving the dataset using heavier and more robust offline optimization, increasing the size of this improved dataset by 4X, and implementing a better architecture and feature space.

- Improved occupancy network detections by oversampling on 180K challenging videos including rain reflections, road debris, and high curvature.

- Improved recall for close-by cut-in cases by 20% by adding 40k autolabeled fleet clips of this scenario to the dataset. Also improved handling of cut-in cases by improved modeling of their motion into ego’s lane, leveraging the same for smoother lateral and longitudinal control for cut-in objects.

- Added “lane guidance” module and perceptual loss to the Road Edges and Lines network, improving the absolute recall of lines by 6% and the absolute recall of road edges by 7%.

- Improved overall geometry and stability of lane predictions by updating the “lane guidance” module representation with information relevant to predicting crossing and oncoming lanes.

- Improved handling through high speed and high curvature scenarios by offsetting towards inner lane lines.

- Improved lane changes, including: earlier detection and handling for simultaneous lane changes, better gap selection when approaching deadlines, better integration between speed-based and nav-based lane change decisions and more differentiation between the FSD driving profiles with respect to speed lane changes.

- Improved longitudinal control response smoothness when following lead vehicles by better modeling the possible effect of lead vehicles’ brake lights on their future speed profiles.

- Improved detection of rare objects by 18% and reduced the depth error to large trucks by 9%, primarily from migrating to more densely supervised autolabeled datasets.

- Improved semantic detections for school busses by 12% and vehicles transitioning from stationary-to-driving by 15%. This was achieved by improving dataset label accuracy and increasing dataset size by 5%.

- Improved decision making at crosswalks by leveraging neural network based ego trajectory estimation in place of approximated kinematic models.

- Improved reliability and smoothness of merge control, by deprecating legacy merge region tasks in favor of merge topologies derived from vector lanes.

- Unlocked longer fleet telemetry clips (by up to 26%) by balancing compressed IPC buffers and optimized write scheduling across twin SOCs.
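
To put the 500ms reaction-time improvement for red light and stop sign runners into perspective, the short sketch below converts that time into distance traveled at a constant speed. The speeds chosen and the helper name `distance_covered_m` are illustrative assumptions and do not come from Tesla's notes. The constant-speed model is also a simplification that ignores braking and the motion of the other vehicle.

```python
# Rough illustration only: how far a vehicle travels in 500 ms at a
# constant speed. Speeds below are assumed examples, not Tesla figures.

MPH_TO_MPS = 0.44704  # exact conversion: 1 mph = 0.44704 m/s


def distance_covered_m(speed_mph: float, time_s: float = 0.5) -> float:
    """Return meters traveled at a constant speed_mph over time_s seconds."""
    return speed_mph * MPH_TO_MPS * time_s


if __name__ == "__main__":
    for speed in (25, 45, 65):
        meters = distance_covered_m(speed)
        print(f"{speed} mph: about {meters:.1f} m covered in 500 ms")
```

Under these assumptions, half a second at highway speed corresponds to roughly three car lengths, which is why an earlier reaction can matter even when the time saved sounds small.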